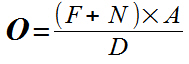

A Personal Reflection by Squashed Philosophers editor Glyn Hughes

ON ETHICAL GRAVITY

1774 words, reading time 12 minutes

How do you decide who to help and when? Here I present an irritating alternative to the usual choice of ethical systems. Irritating in that it seems to predict pretty effectively what people want to do when faced with various moral dilemmas, which is commonly at odds with what they think they ought to do.

When should I help someone in need? Plainly I can't help everyone everywhere all the time. Choices have to be made. These choices are often difficult and the results sometimes seem puzzling. Previous attempts to algorithmise ethics, from Spinoza's application of geometry to morality and Jeremy Bentham's 'Felicific calculus' to the 'Moral Quantity' of Francis Hutcheson, have not been characterised by much success.

But, now, the matter is a bit urgent. We're on the edge of a world of decision-making machines, from self-driving cars to automated news-gathering and robot surgery, so it would be useful if we could look at building a mathematical method to make the most difficult of ethical decisions.

There's no point in searching for the 'correct' or 'true' or even the 'best' decision; that'd just end up going round in circles. All we need is a system which can provide answers with which humans are likely to be contented. An algorithm which imitates the choices typically brought about by human nature. One which ends up giving an answer to which most of the humans near to it would say, "Yep, that's about what I would have done" and therefore not blame the decision-maker. And such a model must be very simple. Simple enough for humans to comprehend, otherwise they won't be able to trust it.

There is already one simple formula which has long been shown to have a good degree of accuracy in predicting certain human choices.
That is the formula for 'Trade Gravity', most famously put forward by Walter Isard, which predicts who will choose to trade which goods at what distance for what price, and which is surprisingly simple and remarkably accurate. I'm going to suggest that ethical puzzles can be analysed, and solved, using a formula analogous to that 'Trade Gravity' algorithm.

To determine whether or not a Care 'Giver' should provide care to a Care 'Receiver' in a particular case, the formula could look like this:

Obligation = ((Familiarity+Need)*Advantage) / Distance

Each factor is ascribed a whole number within a common range, which I'll take as 0 to 10, where:

O = 'Obligation' = The result. If the calculation gives a result of 10 or more, it is taken to mean the Giver should give care; below 10 means 'don't bother'.

F = 'Familiarity', a measure of how well the Giver knows the Receiver. Knowing them well and personally would score '10', barely at all would score '1'. Absolutely no knowledge whatever of their existence would score 0 and, of course, eliminate all obligation. The Receiver's knowledge of the Giver is irrelevant.

N = 'Need' of the Receiver is straightforward: immediate danger of death = '10', vague or minimal risk = '1'. No Need scores '0' and is treated as meaning no obligation at all, no matter what the other factors were; since Need is added rather than multiplied in the formula, this has to be applied as a rule of its own.

A = 'Advantage' is what good is perceived by the Giver to come to them as a result of carrying out the Obligation. It might be advantage to the Giver personally by way of fame or wealth, or it could equally be advantage by way of, say, receiving the accolade of society. We only measure Advantage here, not disadvantage, so the value is never negative, though it may be very low, say just '1' for "slight feeling of having done good".

D = 'Distance' isn't quite the physical distance; it is how easily the Receiver can be accessed by the Giver. So 'stood right next to each other' is a very small distance and might = '1'.
On the 'other side of the planet' might = '10', but if easy access to, say, airline flights was available, it might only be '3'. As we're dealing here with two individuals or groups, there has to be a distance of some sort between them; to ascribe a '0' value would imply the Giver and Receiver occupied the same location in space and would generate, not '0', but an (invalid) division by zero.

EXAMPLES

1) Singer's Drowning Child

Peter Singer's famous example is of the child who appears to be drowning in a pond. While it is generally agreed that one ought to save the child, despite the inconvenience and dirt, Singer points out the moral puzzle that it isn't as easily agreed that one should go to a similar degree of trouble to save a child far away "in another country perhaps, but similarly in danger of death, and equally within your means to save".

In Mr. Singer's first case the Need is simple: it is immediate danger of death, so it rates a full 10. The Distance too is simple: the child is right in front of us and accessible, so it rates the lowest score possible, a 1. Familiarity is medial, in that the person in danger is immediately visible but is assumed to be personally unknown to the would-be rescuer; it therefore gets a 5. The Advantage could be high (praise from society, public honour, reward from the family) but it could be merely a glow of unknown kindness, so I'll try out the minimum value of '1'. This gives:

15 = ((Familiarity=5 +Need=10)*Advantage=1) / Distance=1

15 is a result above 10, so DO save the drowning child.

2) A distant drowning child

Now, taking the same situation as above, but with the child far away, and therefore necessarily less familiar, gives an Obligation value of just 1, so not worth doing. If this seems surprising, it is precisely reflected in public outrage over the 2020 revelation that the British/Irish water lifesaving charity RNLI spends 2% of its income on saving lives from drowning in foreign countries.
Whether one is surprised by the outrage or surprised by the fact that it is a mere 2%, either way, the algorithm shows its worth.

3) The professional rescuer

Which handily brings us to the professional rescuer. Police, fire-fighters, nurses and lifeguards often go to quite extraordinary lengths to offer care to others they barely know. This could be in some way surprising. But, taking the drowning child as an example, we can assume a near-worst-case scenario where Familiarity is a mere '1' (they have to at least know the person-in-danger exists), the Need, though real, is a slight '3', and they are at a '2' Distance away. But the professional rescuer is someone rewarded very highly for rescue prowess, sometimes with pay, but always with praise, honours and public respect, which scores '10', so that:

20 = ((Familiarity=1 +Need=3)*Advantage=10) / Distance=2

Even a quite modest need from an unknown person at a reachable distance scores a huge '20' and explains the risks such heroes are willing to take.

4) Helping those who don't need help

How about helping someone who really doesn't need much help at all? Say, someone who is at a slight risk of stepping in a shallow puddle so their shoes might get just a little bit muddy. Would you throw your expensive cloak over the puddle to save their shoes? Possibly not. But Walter Raleigh supposedly did so for Queen Elizabeth.

40 = ((Familiarity=3 +Need=1)*Advantage=10) / Distance=1

In this case the Familiarity is slight and the Need is trivial. But the Distance is very small and, if you're a royal hanger-on with a desperation for advancement, the Advantage is huge. So Walter throws the cloak.

5) Trolley problem

The irritatingly perennial 'Trolley Problem' presents a scenario where the Giver is required to decide which of (usually two) bad options must be implemented. In the commonest version the dilemma is whether to sacrifice one person to save a larger number. Approached in terms of Ethical Gravity, this is trivially simple.
The choice to be made can be determined by which option gives the higher 'to do' number. The deciding factor is... distance.

But that's not actually the point of the trolley problem as originally formulated by Philippa Foot, of course. It is pretty damned obvious that, other things being equal, we'd all choose to save 5 and lose 1 rather than the other way round. The real question is whether you would pull the lever and so deliberately kill a person, or stand back and let things you didn't cause, and therefore feel no responsibility for, take their course. That is a personal decision for which the Theory of Ethical Gravity will require some tweaking.

So is Ethical Gravity any use? Well, it seems to mimic effectively what humans actually choose to do. This is not a stand-alone ethical algorithm, because it still requires a degree of judgement to choose each of the numbers. What is different here is that, unlike earlier ethical formulas, this one is both simple enough to use and has a go at compensating for errors by playing one number off against another. This is along the lines of what is sometimes called a 'Fermi Quiz', after the physicist Enrico Fermi (1901-1954), who was said to be extraordinarily good at estimating outcomes from minimal information. It works because there are a number of factors all measured on the same scale, so overestimates and underestimates are likely to help cancel each other out. It is possibly more fully explained by something called the James-Stein Estimator Equation, but that is something far too complicated for me to make sense of, and is, therefore, useless. Simple is what works.
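
For anyone who would rather see the sums done by machine, the formula and the worked examples above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the names `obligation`, `should_help` and `THRESHOLD` are invented for the sketch, and the handling of a zero Familiarity or zero Need as "no obligation" is one reading of the factor descriptions rather than something the bare formula produces by itself.

```python
THRESHOLD = 10  # the essay's cut-off: 10 or more means "give care"


def obligation(familiarity, need, advantage, distance):
    """Return ((familiarity + need) * advantage) / distance."""
    for name, value in (("familiarity", familiarity),
                        ("need", need),
                        ("advantage", advantage)):
        if not 0 <= value <= 10:
            raise ValueError(f"{name} must be between 0 and 10, got {value}")
    # A Distance of 0 would mean dividing by zero, so Distance starts at 1.
    if not 1 <= distance <= 10:
        raise ValueError(f"distance must be between 1 and 10, got {distance}")
    # Assumption: an unknown Receiver or an absent Need carries no obligation.
    # The bare formula would not give 0 in these cases, so it is forced here.
    if familiarity == 0 or need == 0:
        return 0.0
    return (familiarity + need) * advantage / distance


def should_help(familiarity, need, advantage, distance):
    """True when the Obligation score reaches the threshold."""
    return obligation(familiarity, need, advantage, distance) >= THRESHOLD


# (Familiarity, Need, Advantage, Distance) as given in the examples.
examples = {
    "Singer's drowning child": (5, 10, 1, 1),
    "The professional rescuer": (1, 3, 10, 2),
    "Raleigh's cloak": (3, 1, 10, 1),
}

for label, factors in examples.items():
    score = obligation(*factors)
    verdict = "help" if should_help(*factors) else "don't bother"
    print(f"{label}: {score:g} -> {verdict}")
```

The three cases score 15, 20 and 40, matching the figures worked out by hand. The distant drowning child is left out because the individual factor values for that case are not stated.
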
REFERENCES

1986 The projection of world (multiregional) trade matrices, W Isard, W Dean

1951 Interregional and Regional Input-Output Analysis: A Model of a Space-Economy, Walter Isard

1997 The Drowning Child and the Expanding Circle, Peter Singer https://www.utilitarian.net/singer/by/199704--.htm

1956 Inadmissibility of the usual estimator for the mean of a multivariate distribution, Charles Stein https://projecteuclid.org/download/pdf_1/euclid.bsmsp/1200501656

1725 Inquiry into the Original of our Ideas of Beauty and Virtue, Francis Hutcheson https://archive.org/details/inquiryintoorigi00hutc

1789 Introduction to the Principles of Morals and Legislation, Jeremy Bentham http://sqapo.com/bentham.htm

1677 Ethics, Demonstrated by the Method of Geometry, Baruch Spinoza http://sqapo.com/spinoza.htm

The naming of multi-level estimation procedures after the Nobel-prize-winning Italian physicist Enrico Fermi (1901-1954) seems to originate from classroom games in the USA, notably promoted in the journal 'Physics Teacher' in the 1990s.

ISBN 9781326806781